- •Contents

- •1 Introduction

- •1.1 Objectives

- •1.2 Overview

- •2 Background

- •2.1 Digital Design for DSP Engineers

- •2.1.2 The Field-Programmable Gate Array

- •2.1.3 Arithmetic on FPGAs

- •2.2 DSP for Digital Designers

- •2.3 Computation Graphs

- •2.4 The Multiple Word-Length Paradigm

- •2.5 Summary

- •3 Peak Value Estimation

- •3.1 Analytic Peak Estimation

- •3.1.1 Linear Time-Invariant Systems

- •3.1.2 Data-range Propagation

- •3.2 Simulation-based Peak Estimation

- •3.3 Hybrid Techniques

- •3.4 Summary

- •4 Word-Length Optimization

- •4.1 Error Estimation

- •4.1.1 Word-Length Propagation and Conditioning

- •4.1.2 Linear Time-Invariant Systems

- •4.1.3 Extending to Nonlinear Systems

- •4.2 Area Models

- •4.3.1 Convexity and Monotonicity

- •4.4 Optimization Strategy 1: Heuristic Search

- •4.5 Optimization Strategy 2: Optimum Solutions

- •4.5.1 Word-Length Bounds

- •4.5.2 Adders

- •4.5.3 Forks

- •4.5.4 Gains and Delays

- •4.5.5 MILP Summary

- •4.6 Some Results

- •4.6.1 Linear Time-Invariant Systems

- •4.6.2 Nonlinear Systems

- •4.6.3 Limit-cycles in Multiple Word-Length Implementations

- •4.7 Summary

- •5 Saturation Arithmetic

- •5.1 Overview

- •5.2 Saturation Arithmetic Overheads

- •5.3 Preliminaries

- •5.4 Noise Model

- •5.4.1 Conditioning an Annotated Computation Graph

- •5.4.2 The Saturated Gaussian Distribution

- •5.4.3 Addition of Saturated Gaussians

- •5.4.4 Error Propagation

- •5.4.5 Reducing Bound Slackness

- •5.4.6 Error estimation results

- •5.5 Combined Optimization

- •5.6 Results and Discussion

- •5.6.1 Area Results

- •5.6.2 Clock frequency results

- •5.7 Summary

- •6 Scheduling and Resource Binding

- •6.1 Overview

- •6.2 Motivation and Problem Formulation

- •6.3 Optimum Solutions

- •6.3.1 Resources, Instances and Control Steps

- •6.3.2 ILP Formulation

- •6.4 A Heuristic Approach

- •6.4.1 Overview

- •6.4.2 Word-Length Compatibility Graph

- •6.4.3 Resource Bounds

- •6.4.4 Latency Bounds

- •6.4.5 Scheduling with Incomplete Word-Length Information

- •6.4.6 Combined Binding and Word-Length Selection

- •6.5 Some Results

- •6.6 Summary

- •7 Conclusion

- •7.1 Summary

- •7.2 Future Work

- •A.1 Sets and functions

- •A.2 Vectors and Matrices

- •A.3 Graphs

- •A.4 Miscellaneous

- •A.5 Pseudo-Code

- •References

- •Index

88 5 Saturation Arithmetic

The second moment (variance) of a saturated Gaussian distribution can be calculated though (5.3).

|

|

|

|

cσ |

c2 |

|

||||

µ2 = σ2 |

− 2 |

Q(c/σ)(σ2 |

− c2) + |

√ |

|

exp(− |

|

) |

(5.3) |

|

2σ2 |

||||||||||

2π |

||||||||||

The fourth moment (related to Kurtosis) of a saturated Gaussian distribution can be calculated through (5.4).

|

|

|

1 |

|

|

c2 |

|

||||

µ4 = 3σ4 |

− 2 |

Q(c/σ)(3σ4 |

− c4) + |

√ |

|

cσ(c2 |

+ 3σ2) exp(− |

|

) |

(5.4) |

|

2σ2 |

|||||||||||

2π |

|||||||||||

Multiplication of a saturated Gaussian random variable of parameters (σ, c) by a constant factor k results in a random variable with saturated Gaussian distribution of parameters (kσ, kc). Clearly the multiple outputs of a branching node whose input has parameters (σ, c) will all have the same parameters (σ, c), and the output of a delay node will behave in a similar manner.

Let X be one such post-multiplication, post-branching, or post-delay saturated Gaussian signal. This signal may then itself be saturated by a nonlinearity with cut-o c¯. The variance of the associated error e injected at the

point of saturation can be calculated as in (5.5), where fXˆ (x) is the pdf of the underlying Gaussian distribution of X.

E{e2} = |

2 c |

(¯c |

|

x)2f |

|

(x)dx + (¯c |

|

c)2Q(c/σ), c¯ < c |

|

|||||||||||

0, c¯ |

|

−2 |

|

|

|

Xˆ |

|

2 |

|

−2 |

|

2σ2 ) |

otherwise |

c¯ < c |

||||||

= √2σ |

(2¯c |

|

c) exp( 2σ2 ) |

|

c¯exp( |

|

|

, |

||||||||||||

|

2(c |

− |

c¯) |

|

Q(c/σ) + 2(¯c2 |

|

|

|

|

) |

− |

Q(c/σ)] + |

|

|||||||

|

|

2π |

|

|

|

− |

|

|

− |

|

− |

|

− |

|

|

|

|

|||

|

|

|

|

|

|

|

|

|

|

|

c |

|

|

|

|

c¯ |

|

|

||

0, otherwise (5.5)

For addition the situation is more complex, and is addressed in the following section.

5.4.3 Addition of Saturated Gaussians

While the addition of two Gaussian random variables follows a Gaussian distribution, it is not true that the addition of two saturated Gaussian random variables follows a saturated Gaussian distribution. In fact, the distribution formed by their addition may follow a number of forms depending on the correlation between the two inputs to the addition, and their respective ‘c’ parameters. Fig. 5.6 illustrates an addition followed by a saturation nonlinearity.

5.4 Noise Model |

89 |

x

1

+ y |

z |

x

2

Fig. 5.6. An addition followed by a saturation

Example 5.7. Fig. 5.7 illustrates one example pdf (before the post-adder sat- |

||||||||||

uration) formed from the summation of two uncorrelated saturated Gaus- |

||||||||||

sian random variables with parameters (σ1 |

= |

1.6e − 02, c1 |

= 2−5) and |

|||||||

(σ2 = 2.2e − 01, c2 = 2−2) respectively. |

|

|

|

|

|

|||||

|

4.5 |

|

|

|

|

|

|

|

|

|

|

|

4 |

|

|

|

|

|

|

|

|

|

3.5 |

|

|

|

|

|

|

|

|

|

|

|

3 |

|

|

|

|

|

|

|

|

|

2.5 |

|

|

|

|

|

|

|

|

|

(y) |

|

|

|

|

|

|

|

|

|

|

Y |

2 |

|

|

|

|

|

|

|

|

|

f |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

1.5 |

|

|

|

|

|

|

|

|

|

|

|

1 |

|

|

|

|

|

|

|

|

|

0.5 |

|

|

|

|

|

|

|

|

|

|

|

0 |

|

|

|

|

|

|

|

|

|

|

−0.4 |

−0.3 |

−0.2 |

−0.1 |

0 |

0.1 |

0.2 |

0.3 |

0.4 |

|

|

|

|

|

|

y |

|

|

|

|

Fig. 5.7. A probability density function for the sum of two saturated Gaussian variables

90 5 Saturation Arithmetic

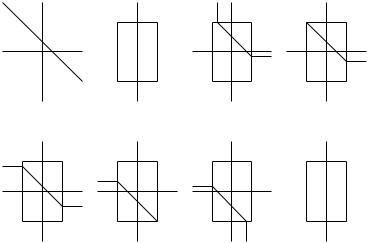

The pdf of the summed saturated Gaussians can be visualized as deriving directly from the underlying joint Gaussian pdf of the of the two inputs. This is illustrated in Fig. 5.8, showing the portions of the underlying joint probability space corresponding to particular values of the sum. Shaded regions indicate entire regions of the plane resulting in the same sum, and black lines link the locus of single points resulting in this sum, as well as indicating the borders of shaded regions.

x2 |

|

x2 |

|

|

|

|

x2 |

|

|

x2 |

|

|

c2 |

|

|

|

|

c2 |

|

|

c2 |

|

|

|

|

|

|

|

||||

x1 |

|

|

|

1 |

-c1 |

c1 x1 |

-c1 |

c1 x1 |

||

-c1 |

c1 x |

|||||||||

|

|

-c2 |

|

-c2 |

|

|

-c2 |

|||

(a) summation of |

(b) summation of |

(c) summation of |

(d) summation of |

|||||||

underlying Gaussians saturated Gaussians saturated Gaussians saturated Gaussians

|

|

y = c1 + c2 |

|

|

|

c2 - c1 < y < c2 + c1 |

y = c2 - c1 |

|

|

||

|

x2 |

x2 |

|

|

|

|

x2 |

|

x2 |

|

|

|

c2 |

c2 |

|

|

|

|

c2 |

|

c2 |

|

|

-c1 |

c1 x1 |

-c1 |

c1 x1 |

-c1 |

c1 x1 |

|

|

|

1 |

||

-c1 |

c1 x |

||||||||||

|

-c2 |

-c2 |

|

|

|

|

-c2 |

|

-c2 |

|

|

|

|

|

|

|

|

|

|

||||

|

|

|

|

|

|

|

|

||||

(e) summation of |

(f) summation of |

(g) summation of |

(h) summation of |

||||||||

saturated |

Gaussians |

saturated Gaussians saturated Gaussians |

saturated Gaussians |

|

c1 - c2 < y < c2 - c1 |

y = c1 - c2 |

-c1 - c2< y < c1- c2 |

y = -c1 - c2 |

|

Fig. 5.8. The pdf of the sum of two saturated Gaussians (b)–(h) compared to the pdf of the sum of the underlying Gaussians (a)

While theoretically these complex distributions could be used as models for the signals in a saturation system, in practice the computational complexity associated with error estimation using these models is exponential in the number of additions in the system. In order to use error estimation within the tight inner loop of an optimization procedure, a linear-time estimation procedure is required. For this reason, the distribution of the random variable after both the addition and its corresponding saturation is approximated by a saturated Gaussian. The parameters of the saturated Gaussian approximation can be tuned to best approximate the more complex pdf. This approach allows a simple linear-time estimation procedure to be used, while sacrificing some accuracy. Note that the estimate of the error caused by the saturation nonlinearity immediately following the addition is based on the full distribution; it is only the propagation of this distribution through the saturation system that is based on the simplified model.

5.4 Noise Model |

91 |

The ‘tuning’ of model parameters can be performed through matching all statistical moments of the two distributions, up to and including the fifth moment. The procedure is as follows: firstly the ‘true’ second and fourth moment of the saturated sum are calculated, and secondly the model parameters (σm, cm) are chosen to match these moments. In order to calculate the ‘true’ moments and correlation coe cients for each addition, the transfer functions from each primary input to each adder input must be known.

Example 5.8. Since saturation arithmetic is particularly useful for IIR filters, we consider here a second order Direct Form II transposed IIR section, typically used as a building block for larger order IIR filters [Mit98]. Such an IIR section is illustrated in Fig. 3.2, reproduced in Fig. 5.9 for convenience where each addition has been labelled A1 to A4.

x

b2 |

b1 |

|

b0 |

|

|

A2 |

|

A4 + z-1 |

+ |

+ z-1 |

+ |

|

|

|

y |

|

A3 |

|

A1 |

-a 2 |

|

-a1 |

|

Fig. 5.9. A second order IIR section

The covariance calculations at each adder are provided in (5.6) for completeness. In order to be able to calculate the correlations between inputs to adders for a second order section, we therefore require rxy [τ ] for τ = −1, 0, 1, 2, rxx[τ ] for τ = 1, 2 and ryy [τ ] for τ = 1. These values can be calculated knowing rxx and the transfer functions to each adder input in the system.

A4: E{−b2x[n]a2y[n]} = −b2a2rxy [0]

A3: E{b1x[n](b2x[n − 1] − a2y[n − 1])} = b1b2rxx[1] − b1a2rxy [1] A2: E{−a1y[n](b1x[n] + b2x[n − 1] − a2y[n − 1])} =

−a1b1rxy [0] − a1b2rxy [−1] + a1a2ryy [1]

A1: E{b0x[n](−a1y[n − 1] + b1x[n − 1] + b2x[n − 2] − a2y[n − 2])} = −a1b0rxy [1] + b0b1rxx[1] + b0b2rxx[2] − b0a2rxy [2]

(5.6)

It is important to note that these calculations do not depend in any way on the actual cut-o values for the saturation nonlinearities since they are